SQL dumps are great for databases but useless for APIs, NoSQL, and JavaScript applications. Converting INSERT statements to JSON preserves data types, handles multi-row inserts, and makes your data portable across platforms—from MySQL to MongoDB, PostgreSQL to REST APIs.

Why Convert SQL to JSON?

Common Use Cases

- API Development: Seed data for REST/GraphQL APIs

- NoSQL Migration: Migrate MySQL → MongoDB

- Frontend Development: Mock data for React/Vue/Angular

- Data Analysis: Import SQL dumps into Jupyter/Python

- Testing: Generate test fixtures from production data

- Cross-platform sync: Move data between different databases

SQL INSERT Basics (What We're Converting)

Single-Row INSERT

INSERT INTO users (id, name, email, age, active)

VALUES (1, 'John Doe', 'john@example.com', 30, true);Converts to:

[

{

"id": 1,

"name": "John Doe",

"email": "john@example.com",

"age": 30,

"active": true

}

]Multi-Row INSERT

INSERT INTO users (id, name, email, age, active)

VALUES

(1, 'John Doe', 'john@example.com', 30, true),

(2, 'Jane Smith', 'jane@example.com', 25, false),

(3, 'Bob Johnson', NULL, NULL, true);Converts to:

[

{

"id": 1,

"name": "John Doe",

"email": "john@example.com",

"age": 30,

"active": true

},

{

"id": 2,

"name": "Jane Smith",

"email": "jane@example.com",

"age": 25,

"active": false

},

{

"id": 3,

"name": "Bob Johnson",

"email": null,

"age": null,

"active": true

}

]The Critical Challenge: Type Preservation

SQL has explicit types; JSON has strings, numbers, booleans, null, and objects/arrays. Proper conversion must preserve types:

| SQL Value | Wrong JSON | Correct JSON |

|---|---|---|

NULL |

"NULL" ❌ |

null ✅ |

true |

"true" ❌ |

true ✅ |

123 |

"123" ❌ |

123 ✅ |

45.67 |

"45.67" ❌ |

45.67 ✅ |

'text' |

text (no quotes) ❌ |

"text" ✅ |

Handling Complex Cases

1. NULL vs 'NULL' String

SQL distinguishes:

INSERT INTO products (name, description)

VALUES

('Widget', NULL), -- description is NULL (not set)

('Gadget', 'NULL'); -- description is string "NULL"JSON output:

[

{ "name": "Widget", "description": null },

{ "name": "Gadget", "description": "NULL" }

]2. Strings with Quotes

SQL escapes quotes:

INSERT INTO posts (title)

VALUES ('He said, "Hello!"'); -- MySQL/PostgreSQL

VALUES ('He said, ''Hello!'''); -- Standard SQL (double single-quote)JSON output (must escape properly):

{ "title": "He said, \"Hello!\"" }3. Dates and Timestamps

SQL:

INSERT INTO events (name, created_at)

VALUES ('Launch', '2026-03-05 14:30:00');JSON (keep as ISO string):

{ "name": "Launch", "created_at": "2026-03-05T14:30:00Z" }Or convert to Unix timestamp if preferred.

4. Multi-Line Strings

SQL:

INSERT INTO articles (content)

VALUES ('Line 1

Line 2

Line 3');JSON (escape newlines):

{ "content": "Line 1\nLine 2\nLine 3" }Parsing Strategies

Regex-Based Parsing (Quick but Fragile)

Use case: Simple INSERT statements without complex strings

// JavaScript example

const sql = "INSERT INTO users (id, name) VALUES (1, 'John'), (2, 'Jane')";

const columns = sql.match(/\(([^)]+)\)/)[1].split(',').map(s => s.trim());

const valuesMatch = sql.match(/VALUES\s+(.+)/i)[1];

const rows = valuesMatch.split(/\),\s*\(/).map(row => {

row = row.replace(/^\(|\)$/g, '');

const values = row.split(/,\s*(?![^']*')/); // Split on commas outside quotes

return columns.reduce((obj, col, i) => {

obj[col] = parseValue(values[i]);

return obj;

}, {});

});

function parseValue(val) {

val = val.trim();

if (val === 'NULL') return null;

if (val === 'true' || val === 'TRUE') return true;

if (val === 'false' || val === 'FALSE') return false;

if (/^\d+$/.test(val)) return parseInt(val);

if (/^\d+\.\d+$/.test(val)) return parseFloat(val);

return val.replace(/^'|'$/g, '').replace(/''/g, "'"); // Remove quotes, unescape

}⚠️ Limitation: Breaks with complex strings (commas, quotes, newlines)

SQL Parser Library (Robust)

Recommended: Use a proper SQL parser like node-sql-parser (JavaScript) or sqlparse (Python).

// Node.js example with node-sql-parser

const { Parser } = require('node-sql-parser');

const parser = new Parser();

const sql = "INSERT INTO users (id, name) VALUES (1, 'John'), (2, 'Jane')";

const ast = parser.astify(sql);

const columns = ast.columns.map(c => c.column);

const rows = ast.values.map(row =>

columns.reduce((obj, col, i) => {

obj[col] = row.value[i].value;

return obj;

}, {})

);

console.log(JSON.stringify(rows, null, 2));Common Conversion Patterns

MySQL Dump → JSON

MySQL dumps often include:

LOCK TABLES `users` WRITE;

INSERT INTO `users` VALUES (1,'John'),(2,'Jane');

UNLOCK TABLES;Steps:

- Extract only INSERT statements (ignore LOCK/UNLOCK)

- Handle backtick-quoted identifiers

- Parse VALUES clause

PostgreSQL → MongoDB

PostgreSQL uses different quoting:

INSERT INTO users (id, data) VALUES

(1, '{"role": "admin"}'), -- JSON column

(2, ARRAY[1,2,3]); -- Array typeConversion notes:

- JSON columns: Parse the JSON string

- Arrays: Convert

ARRAY[1,2,3]→[1,2,3] - UUIDs: Keep as strings or convert to MongoDB ObjectId

Tools and Scripts

Our Online Converter

Use SQL to JSON Converter for:

- ✅ Automatic type detection

- ✅ Multi-row INSERT support

- ✅ NULL, boolean, number handling

- ✅ Client-side processing (no upload)

- ✅ Formatted JSON output

Python Script (Advanced)

import re

import json

def sql_to_json(sql):

# Extract table name

table_match = re.search(r'INSERT INTO (w+)', sql)

table = table_match.group(1) if table_match else 'data'

# Extract columns

cols_match = re.search(r'\(([^)]+)\)\s*VALUES', sql)

columns = [c.strip() for c in cols_match.group(1).split(',')]

# Extract values (simplified - use sqlparse for production)

values_match = re.search(r'VALUES\s+(.+)', sql, re.IGNORECASE | re.DOTALL)

values_str = values_match.group(1).rstrip(';')

# Parse rows (basic regex - improve for complex cases)

rows = []

for match in re.finditer(r'\(([^)]+)\)', values_str):

values = []

for v in match.group(1).split(','):

v = v.strip()

if v == 'NULL':

values.append(None)

elif v.lower() in ('true', 'false'):

values.append(v.lower() == 'true')

elif re.match(r'^\d+$', v):

values.append(int(v))

elif re.match(r'^\d+\.\d+$', v):

values.append(float(v))

else:

values.append(v.strip("'").replace("''", "'"))

rows.append(dict(zip(columns, values)))

return json.dumps(rows, indent=2)

# Usage

sql = """

INSERT INTO users (id, name, active) VALUES

(1, 'John', true),

(2, 'Jane', NULL);

"""

print(sql_to_json(sql))Best Practices

1. Preserve Original Types

- Numbers as JSON numbers (not strings)

- Booleans as

true/false - NULL as

null - Dates as ISO 8601 strings

2. Handle Special Characters

- Escape quotes in strings (

\") - Convert newlines to

\n - Handle Unicode characters properly (UTF-8)

3. Structure for Import

Match your target system:

- MongoDB: Add

_idfield if needed - PostgreSQL JSONB: Can import directly

- REST API: Wrap in

{ "data": [...] }if required

4. Large Datasets

- Process in chunks (e.g., 1000 rows at a time)

- Use streaming JSON writer (not

JSON.stringifyfor GB files) - Consider NDJSON (newline-delimited JSON) for huge datasets

Use Cases: Real-World Examples

Seeding a MongoDB Collection

// After conversion, import to MongoDB

mongoimport --db mydb --collection users --file users.json --jsonArrayCreating Test Fixtures

// fixtures/users.json (for Jest, Mocha, etc.)

module.exports = [

{ "id": 1, "name": "Test User", "email": "test@example.com" },

{ "id": 2, "name": "Admin User", "email": "admin@example.com" }

];Feeding Data to Frontend

// React component

import usersData from './data/users.json';

function UserList() {

return usersData.map(user =>

{user.name}

);

}FAQ: SQL to JSON Conversion

Can I convert SELECT results to JSON?

Yes! Many databases support JSON output directly: PostgreSQL (row_to_json()), MySQL (JSON_OBJECT()), or use tools like jq to convert CSV/TSV query results.

What about CREATE TABLE statements?

Those define schema, not data. For schema conversion, consider JSON Schema or just document the structure in comments.

How do I handle BLOB/binary data?

Convert to Base64 string: { "image": "iVBORw0KGgo..." }. Most JSON parsers handle Base64, and you can decode on the client.

Can I convert multiple INSERT statements at once?

Yes—process each INSERT separately, then combine the resulting arrays or output as NDJSON (one JSON object per line).

What's the best JSON format for large datasets?

NDJSON (newline-delimited JSON) is more efficient than a single large array—each line is a complete JSON object, allowing streaming processing.

Related Converters

- JSON to SQL — Reverse conversion

- CSV to JSON — Alternative data format

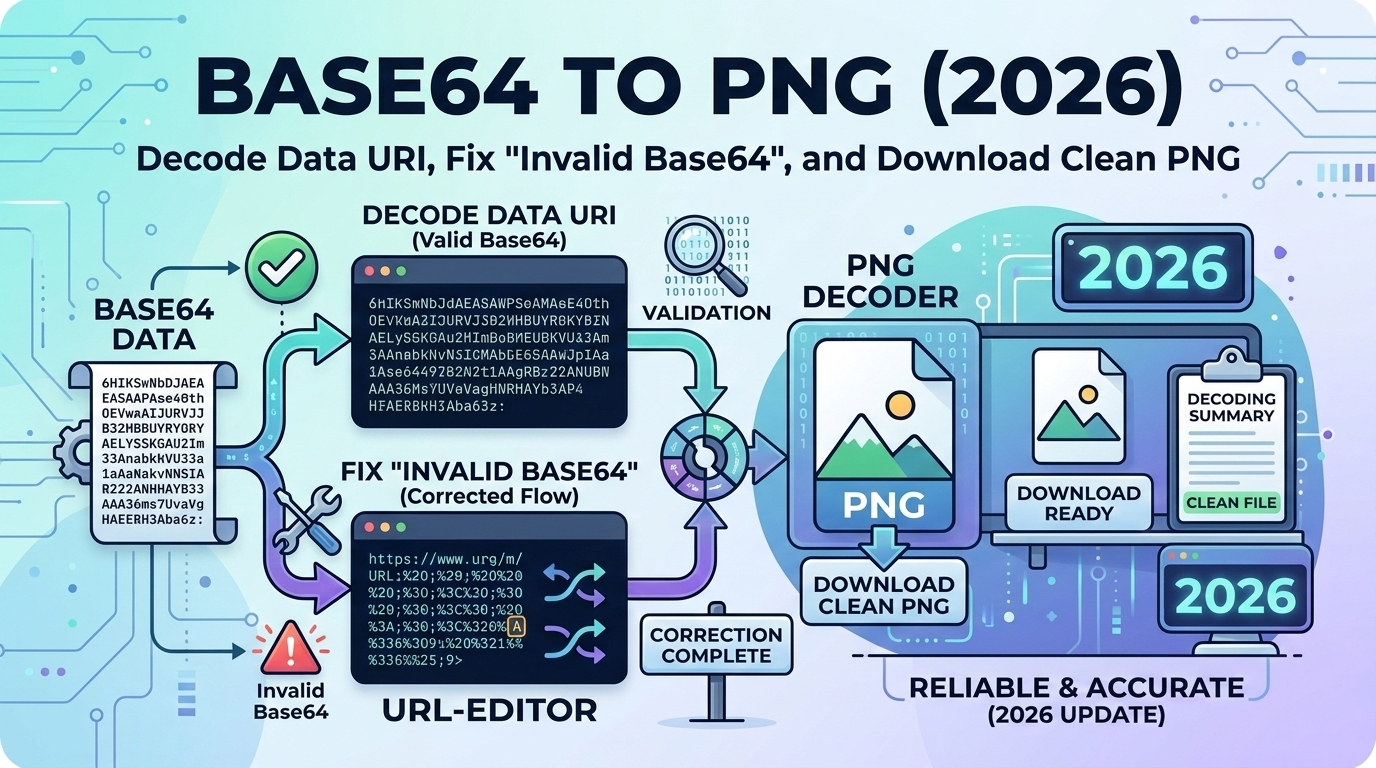

- Base64 to PNG (2026): Decode Image Data - Another essential data format conversion

- VCF to CSV (2026): Contact Data Export - Convert structured contact data

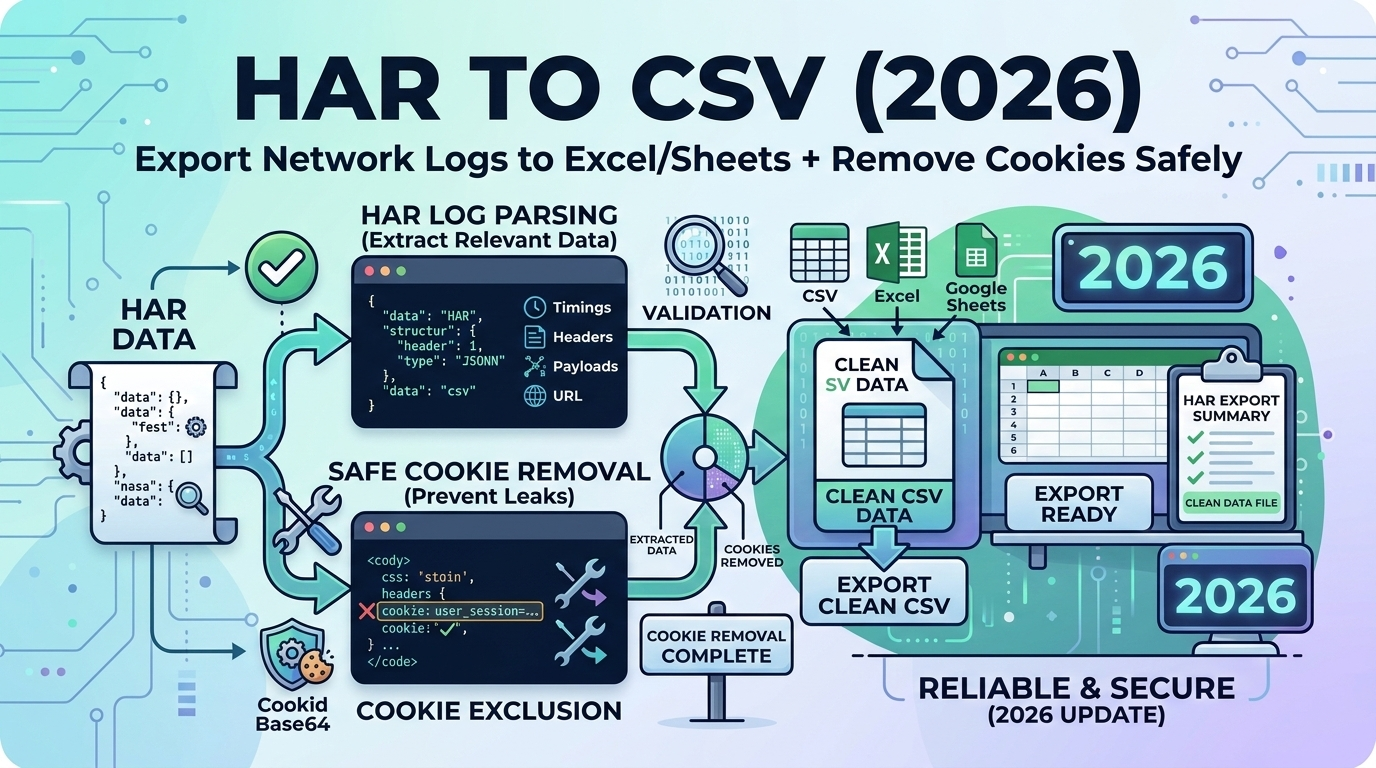

- HAR to CSV (2026): Network Log Analysis - Transform complex data for analysis

- JSON to CSV — For spreadsheet analysis

- XML to JSON — Legacy data formats

Related Articles

Conclusion

Converting SQL INSERT statements to JSON is essential for modern application development, enabling seamless data migration from relational databases to NoSQL systems, REST APIs, and JavaScript applications. The key challenges—type preservation, multi-row parsing, and special character handling—are solvable with proper parsing tools and attention to SQL syntax variations. Use our SQL to JSON Converter for instant, type-safe conversion with automatic NULL, boolean, and numeric handling. Whether seeding MongoDB, creating test fixtures, or feeding data to React/Vue applications, proper SQL to JSON conversion ensures data integrity across your entire stack.