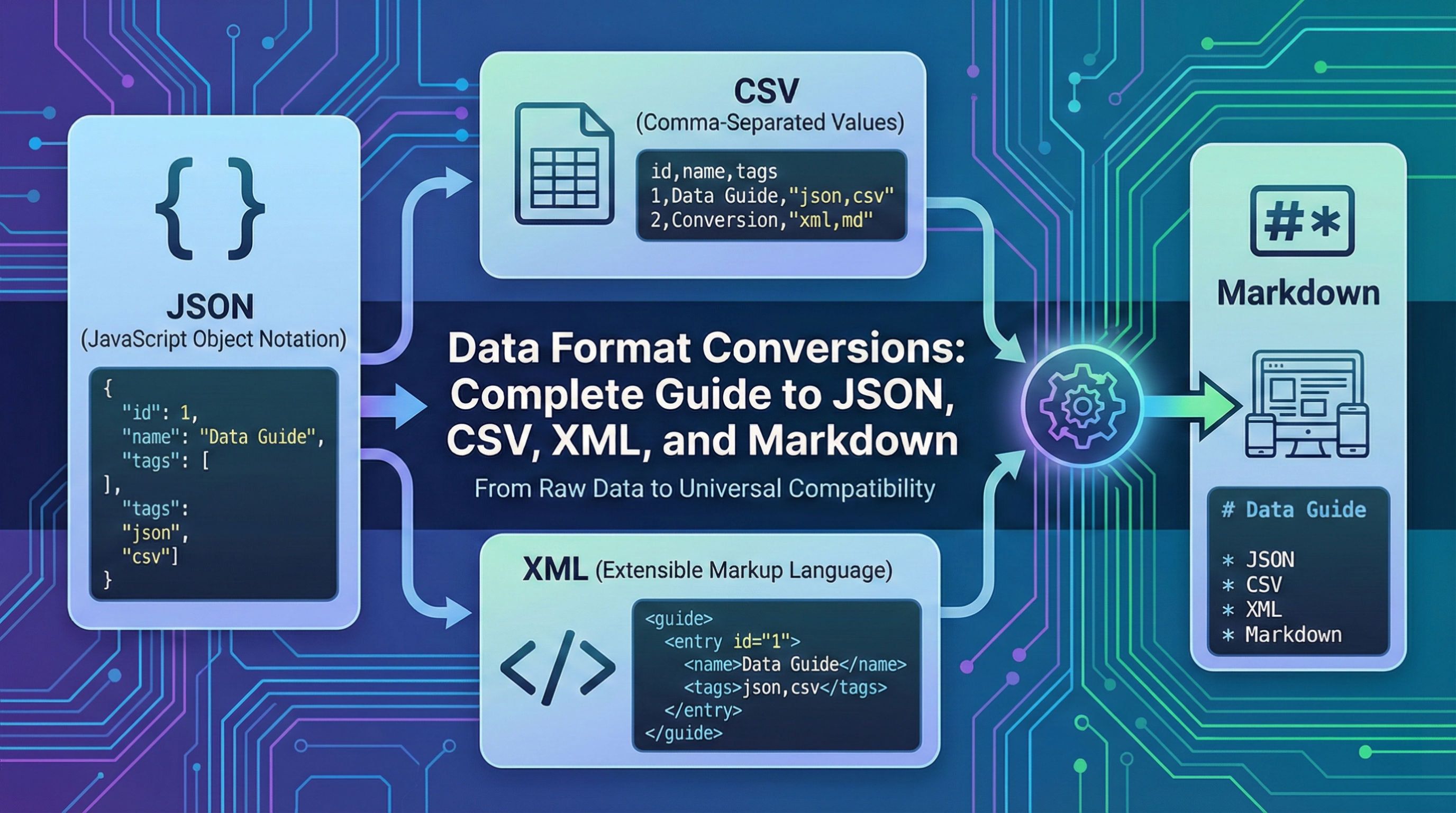

Understanding Data Formats

Data format transformations are essential for integrating systems, migrating data, and icing comity across platforms. Each format has strengths and limitations that make it suitable for specific use cases.

Format Overview

JSON (JavaScript Object Memorandum)

JSON has come the ubiquitous standard for data exchange:

- Mortal-readable: Easy to read and write

- Language-agnostic: Parsers available for every language

- Native JavaScript: Direct parsing in web operations

- Hierarchical: Supports nested structures

- Type-apprehensive: Distinguishes strings, figures, booleans, null

CSV (Comma-Separated Values)

CSV remains popular for irregular data:

- Simple format: Easy to produce and understand

- Spreadsheet compatible: Excel, Google wastes native support

- Compact: Small train sizes

- Flat structure: Two-dimensional data only

- Universal: Supported by nearly all database and analytics tools

XML (eXtensible Markup Language)

XML powers enterprise and heritage systems:

- Tone-describing: Markers explain data meaning

- Hierarchical: Complex nested structures

- Validated: Schema confirmation (XSD)

- Attributes content: Metadata alongside data

- Enterprise standard: Cleaner, RSS, SVG

Markdown

Markdown focuses on readable textbook formatting:

- Mortal-readable: Plain textbook with formatting symbols

- HTML compatible: Converts fairly to HTML

- Documentation standard: README lines, wikis, croakers

- Snippersnappy: Minimum syntax outflow

- Interpretation control friendly: Git diffs are readable

JSON Conversions

JSON to CSV

Converting hierarchical to flat structure:

Challenges:

- Nested objects: Flattening loses structure

- Arrays: Multiple rows or concatenated strings

- Mixed types: Must decide on representation

- Variable keys: Objects with different properties

Best Practices:

- Flat JSON arrays: One row per object

- Consistent keys: All objects should have same properties

- Dot notation: Represent nesting (user.name → "user.name" column)

- Array handling: Convert to JSON strings or separate rows

Example Conversion:

// JSON

[

{"name": "John", "age": 30, "city": "NYC"},

{"name": "Jane", "age": 25, "city": "LA"}

]

// CSV

name,age,city

John,30,NYC

Jane,25,LACSV to JSON

Converting flat to hierarchical structure:

Advantages:

- Type inference: Detect numbers vs strings

- Structure addition: Nest related fields

- Null handling: Explicit null representation

Considerations:

- Header row: Use as JSON keys

- Empty fields: Convert to null or empty string?

- Number detection: Parse numeric strings to numbers

- Quote handling: Preserve quoted commas

JSON to XML

Both support hierarchy but have different conventions:

Mapping Rules:

- Objects: Become XML elements

- Arrays: Repeated elements with same tag

- Primitives: Text content of elements

- Keys: Become element names (must be valid XML)

Example:

// JSON

{"user": {"name": "John", "age": 30}}

// XML

<user>

<name>John</name>

<age>30</age>

</user>XML to JSON

Converting markup to object notation:

Challenges:

- Attributes: How to represent in JSON?

- Mixed content: Text + elements combined

- Namespaces: XML feature without JSON equivalent

- Comments: Usually discarded

Common Conventions:

- Attributes: Use @ prefix (@id, @class)

- Text content: Use $ or #text key

- Single vs array: Single child → object, multiple → array

CSV Best Practices

Delimiter Selection

Choose delimiters wisely:

- Comma (,): Standard, but requires escaping commas in data

- Tab ( ): Good for data containing commas

- Pipe (|): Rarely appears in data

- Semicolon (;): European Excel default

Escaping and Quoting

Handle special characters correctly:

- Quote fields containing delimiter, newlines, or quotes

- Escape quotes by doubling them ("" inside quoted field)

- Consistent quoting: All fields quoted vs minimal quoting

Encoding

Character encoding matters:

- UTF-8: Modern standard, supports all characters

- UTF-8 with BOM: Excel compatibility for Unicode

- ISO-8859-1: Legacy systems

- Windows-1252: Excel default in some regions

Data Types

CSV has no native types:

- Numbers: No quotes (123 not "123")

- Dates: Use ISO 8601 (YYYY-MM-DD)

- Booleans: true/false or 1/0 or Y/N

- Null: Empty field or explicit "NULL"

XML Considerations

Well-Formed XML

Requirements for valid XML:

- Single root element: One top-level element

- Proper nesting: Tags must close in order

- Closed tags: All tags must close

- Case sensitive: <Name> ≠ <name>

- Attribute quotes: Values must be quoted

Special Characters

XML entities for special characters:

- < for <

- > for >

- & for &

- " for "

- ' for '

CDATA Sections

For unescaped content:

<![CDATA[

Content with < > & doesn't need escaping

]]>XML Namespaces

Avoid naming conflicts:

<root xmlns:custom="http://example.com/ns">

<custom:element>Value</custom:element>

</root>Markdown Conversions

Markdown to HTML

Most common markdown conversion:

Elements Mapping:

- # Heading → <h1>Heading</h1>

- **bold** → <strong>bold</strong>

- *italic* → <em>italic</em>

- [link](url) → <a href="url">link</a>

- - list item → <li>list item</li>

Code Blocks:

```javascript

code here

```

→

<pre><code class="language-javascript">

code here

</code></pre>HTML to Markdown

Reverse conversion challenges:

- Complex HTML: Not all HTML converts cleanly

- Styling: CSS styles lost

- Tables: Markdown tables have limitations

- Nested structures: Deep nesting simplified

Common Conversion Pitfalls

Data Loss

Conversions that lose information:

- JSON to CSV: Nested structures flattened

- XML to JSON: Attributes vs elements distinction

- Markdown to plain text: Formatting lost

- Any to CSV: Type information lost

Encoding Issues

- Character corruption: Wrong encoding detection

- BOM handling: Byte order marks causing issues

- Line endings: Windows (CRLF) vs Unix (LF)

- Special characters: Emoji, currency symbols

Type Coercion

Automatic type conversion problems:

- "01234" → 1234: Leading zeros lost

- Large numbers: Precision loss in floats

- Date detection: "1-2-3" parsed as date

- Boolean conversion: "false" string vs false boolean

Validation

JSON Validation

- JSON Schema: Define structure and types

- Linting: Check for valid JSON syntax

- Required fields: Ensure essential data present

CSV Validation

- Column count: Consistent across rows

- Header validation: Expected column names

- Data type checking: Numeric columns contain numbers

- Required fields: No empty required values

XML Validation

- Well-formed check: Proper syntax

- XSD validation: Schema compliance

- DTD validation: Document type definition

Performance Considerations

Large Files

Strategies for big data:

- Streaming parsing: Process chunks incrementally

- Generator functions: Yield rows one at a time

- Memory limits: Don't load entire file

- Progress indication: Show conversion progress

Format-Specific Speed

- CSV: Fastest to parse (simple format)

- JSON: Fast with native browser parsing

- XML: Slower (complex parsing, DOM building)

- Markdown: Fast (text processing)

Use Case Recommendations

When to Use JSON

- API responses and requests

- Configuration files

- Web application data exchange

- NoSQL database storage

- Hierarchical data structures

When to Use CSV

- Spreadsheet data import/export

- Database table dumps

- Analytics and reporting data

- Flat, tabular data

- Data sharing with non-technical users

When to Use XML

- Enterprise integration (SOAP)

- Document-centric data (DocBook)

- RSS/Atom feeds

- SVG graphics

- Legacy system compatibility

When to Use Markdown

- Documentation and README files

- Blog posts and articles

- Comments and forum posts

- Note-taking applications

- Email composition (with HTML output)

Security Considerations

Injection Attacks

- CSV injection: Formulas in CSV fields

- XML injection: XXE (XML External Entity) attacks

- JSON parsing: eval() injection risks

Sanitization

- Validate input before conversion

- Escape special characters properly

- Use safe parsing libraries

- Disable dangerous features (XML external entities)

Tools and Libraries

Browser APIs

- JSON.parse/stringify: Native JSON handling

- DOMParser: XML parsing

- TextEncoder/Decoder: Character encoding

Third-Party Libraries

- PapaParse: Powerful CSV parsing

- marked: Markdown to HTML

- xml2js: XML/JSON conversion

Testing Conversions

Test Cases

- Empty files and empty fields

- Special characters and Unicode

- Maximum size limits

- Malformed input handling

- Edge cases (nested arrays, mixed types)

Validation

- Round-trip testing (A→B→A should equal original)

- Data integrity checks

- Character encoding verification

- Performance benchmarks

Conclusion

Data format conversions are essential but require careful attention to structure, encoding, and data preservation. Understanding the strengths and limitations of each format helps you choose the right approach for your specific use case. Always validate conversions, handle edge cases properly, and test with real-world data. Whether you're building APIs, processing analytics data, or migrating between systems, mastering these format conversions ensures data integrity and smooth interoperability across platforms and applications.